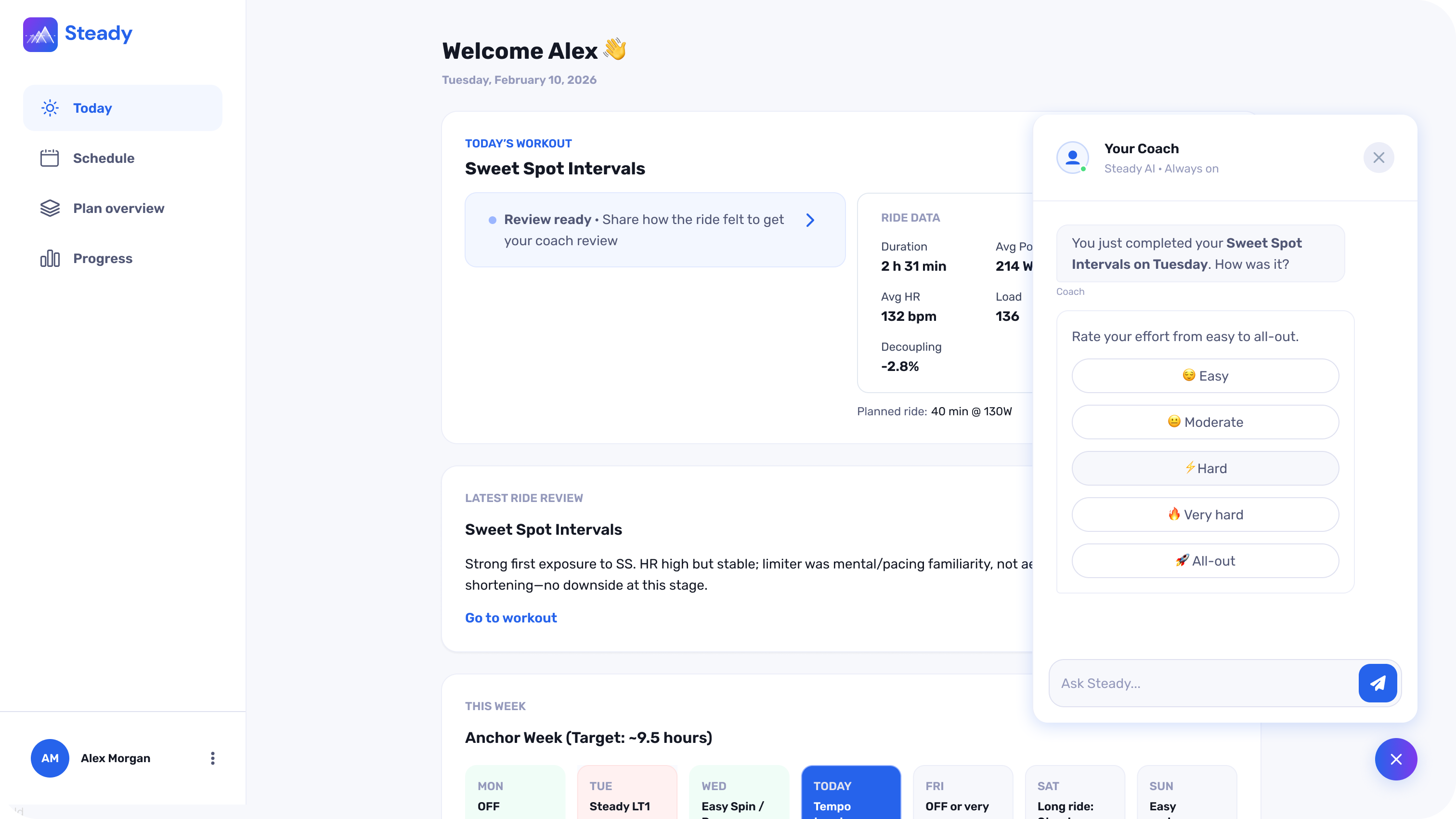

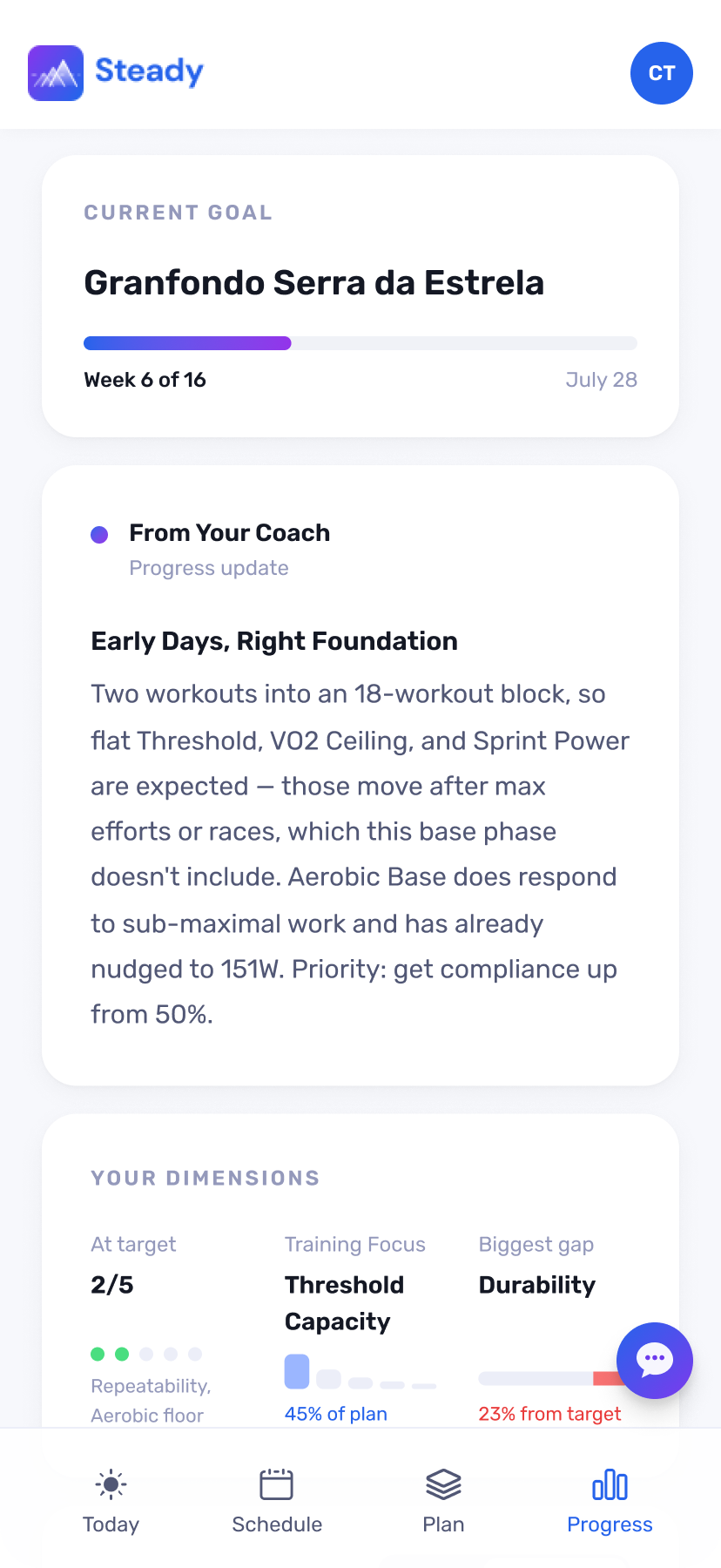

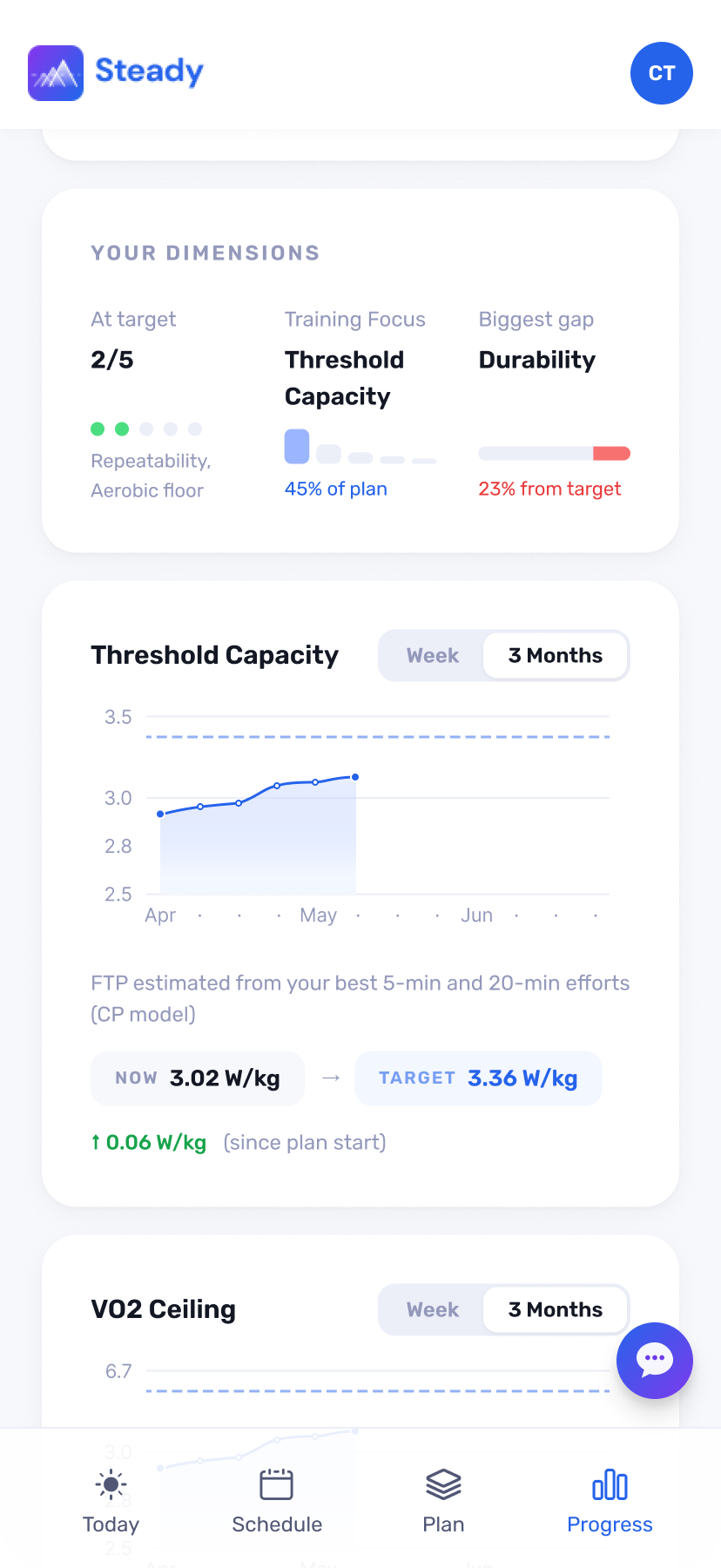

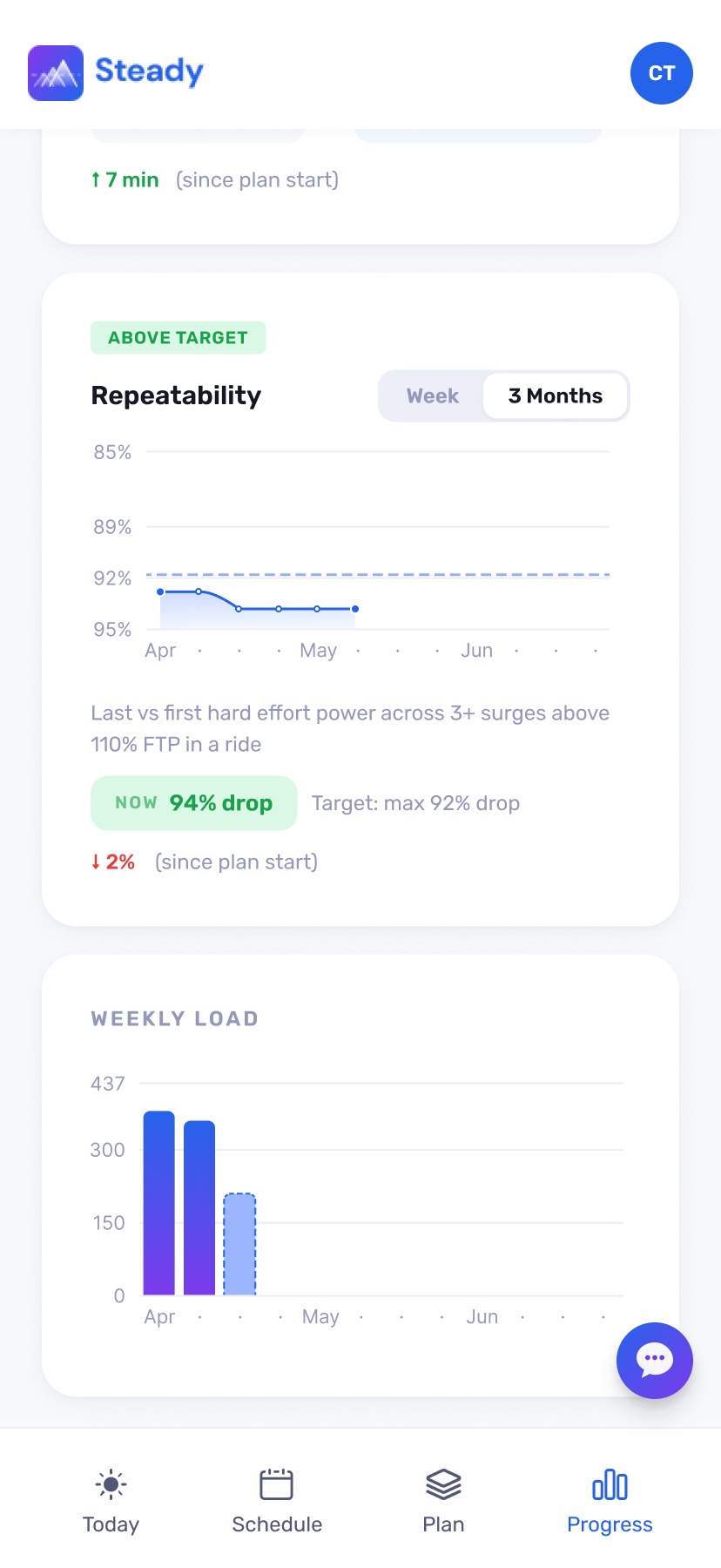

Steady is an AI cycling coaching app for serious cyclists. It builds science-based training plans, reviews every ride, and adapts the plan every week. I co-founded it with my husband: he built the backend, the training logic, and the cycling science, and I designed and built the full product experience.

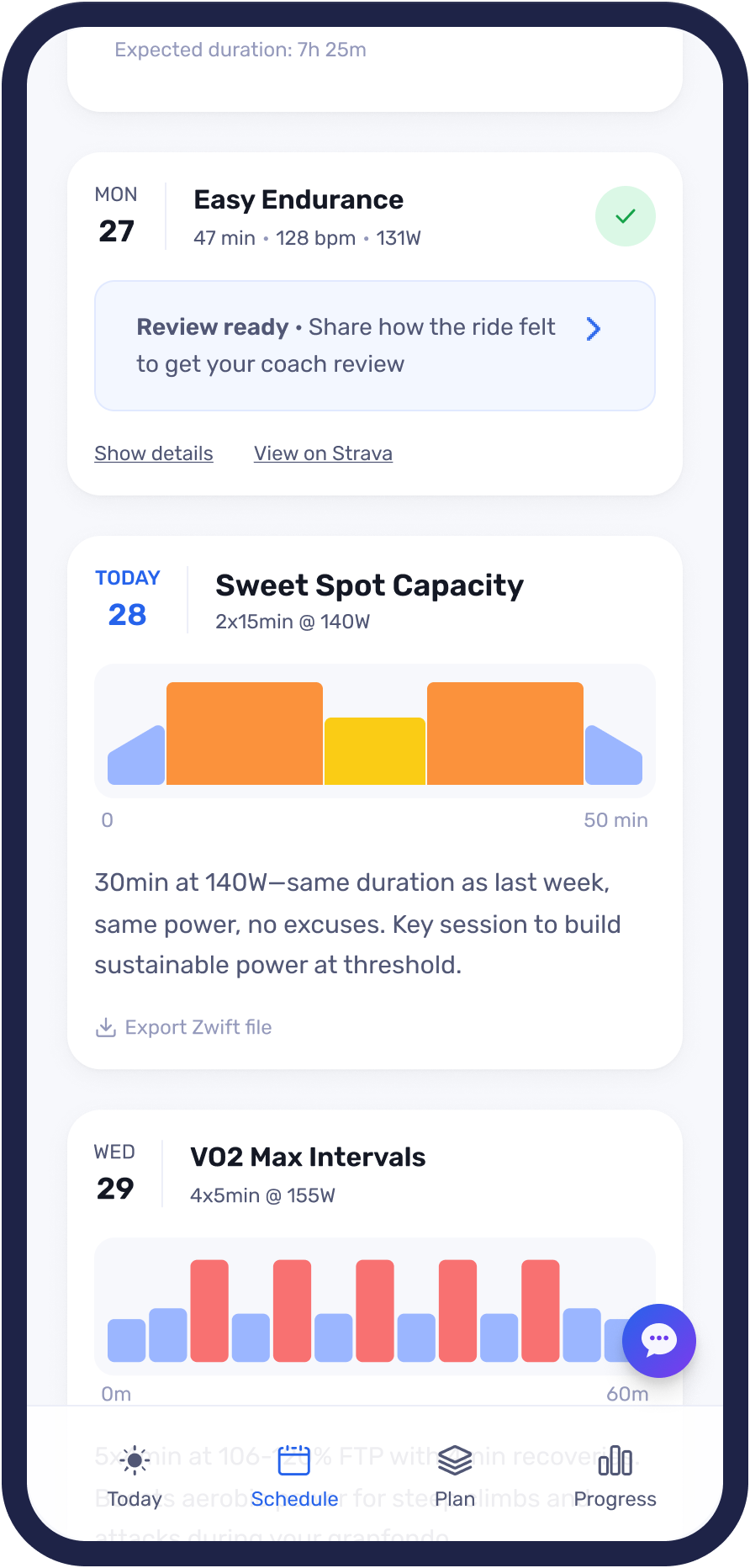

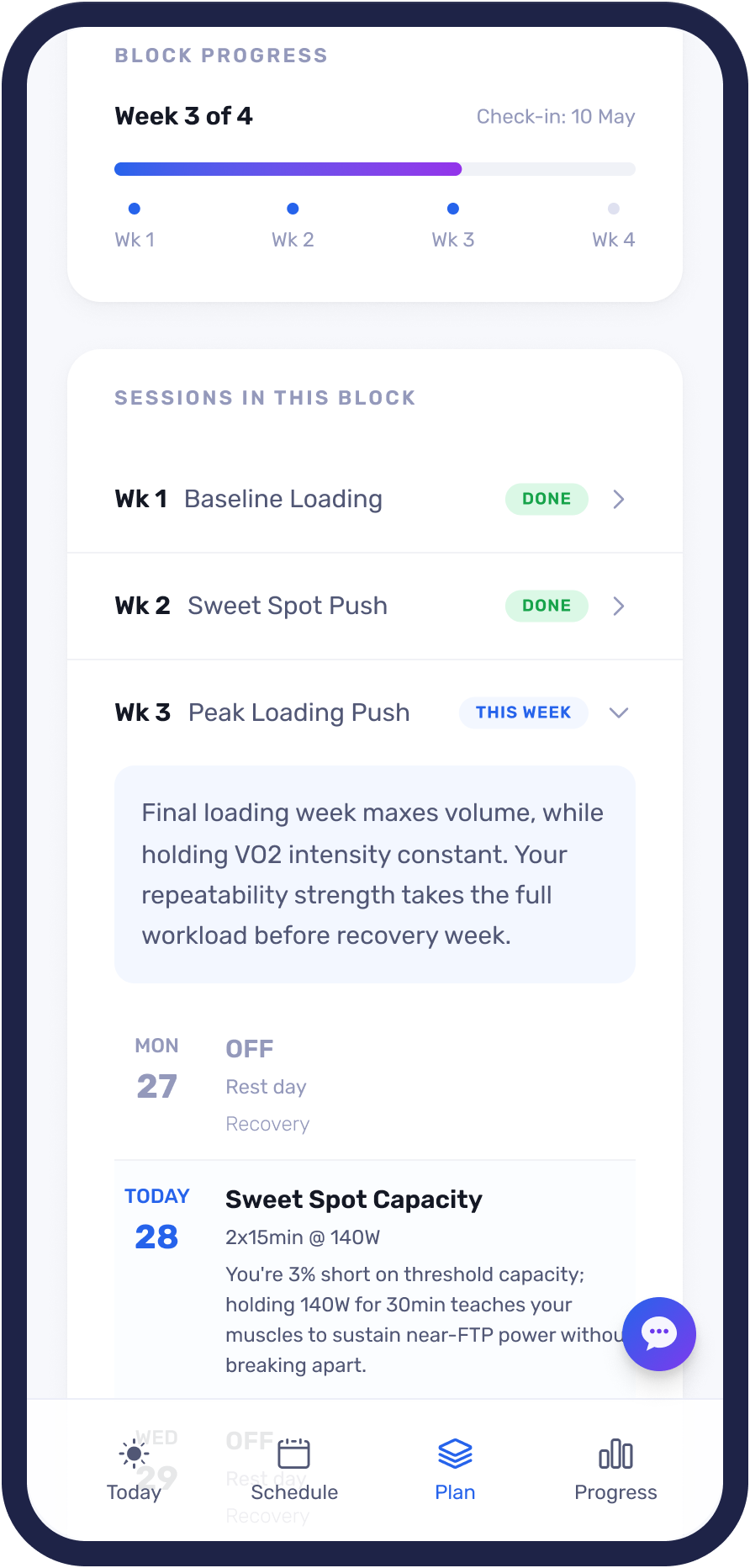

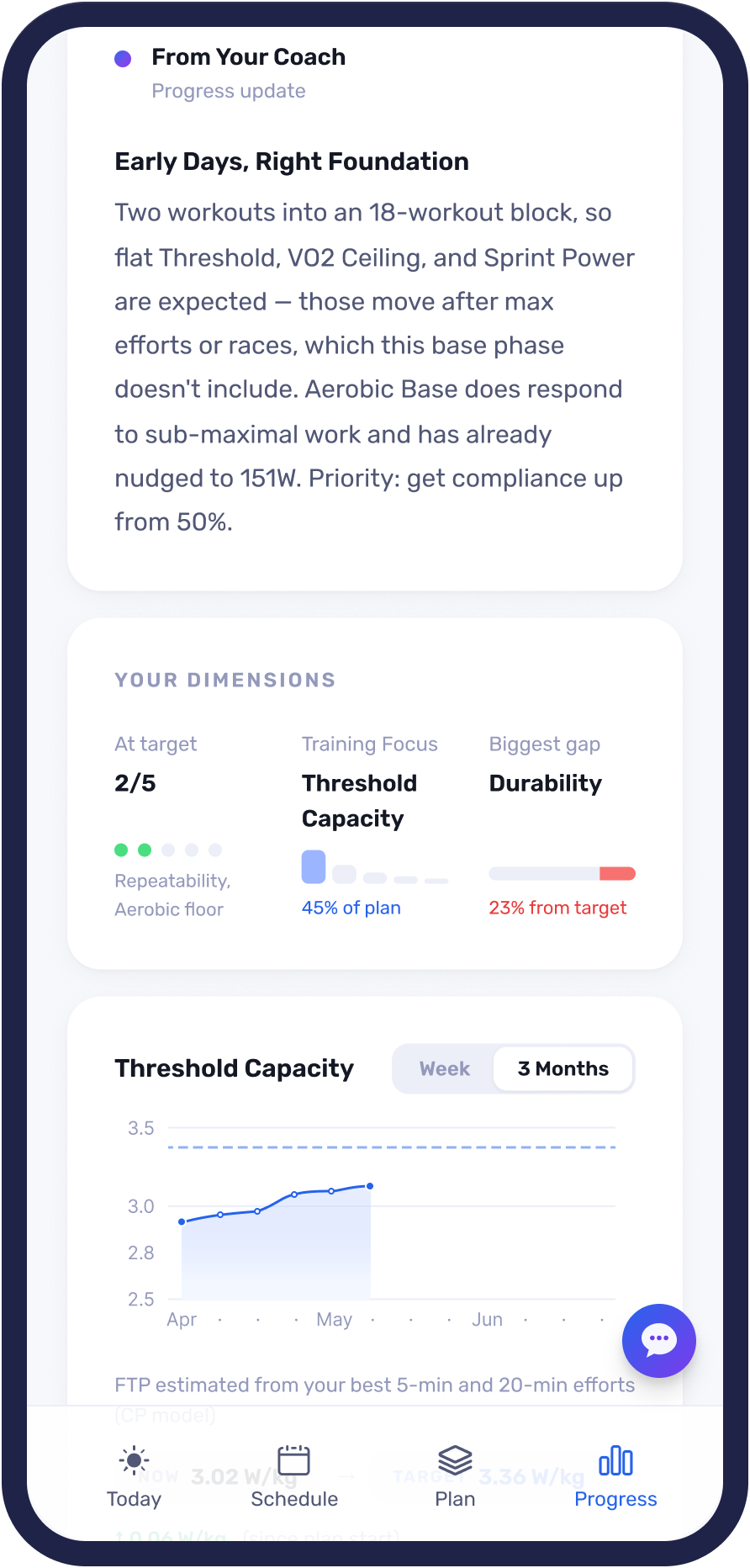

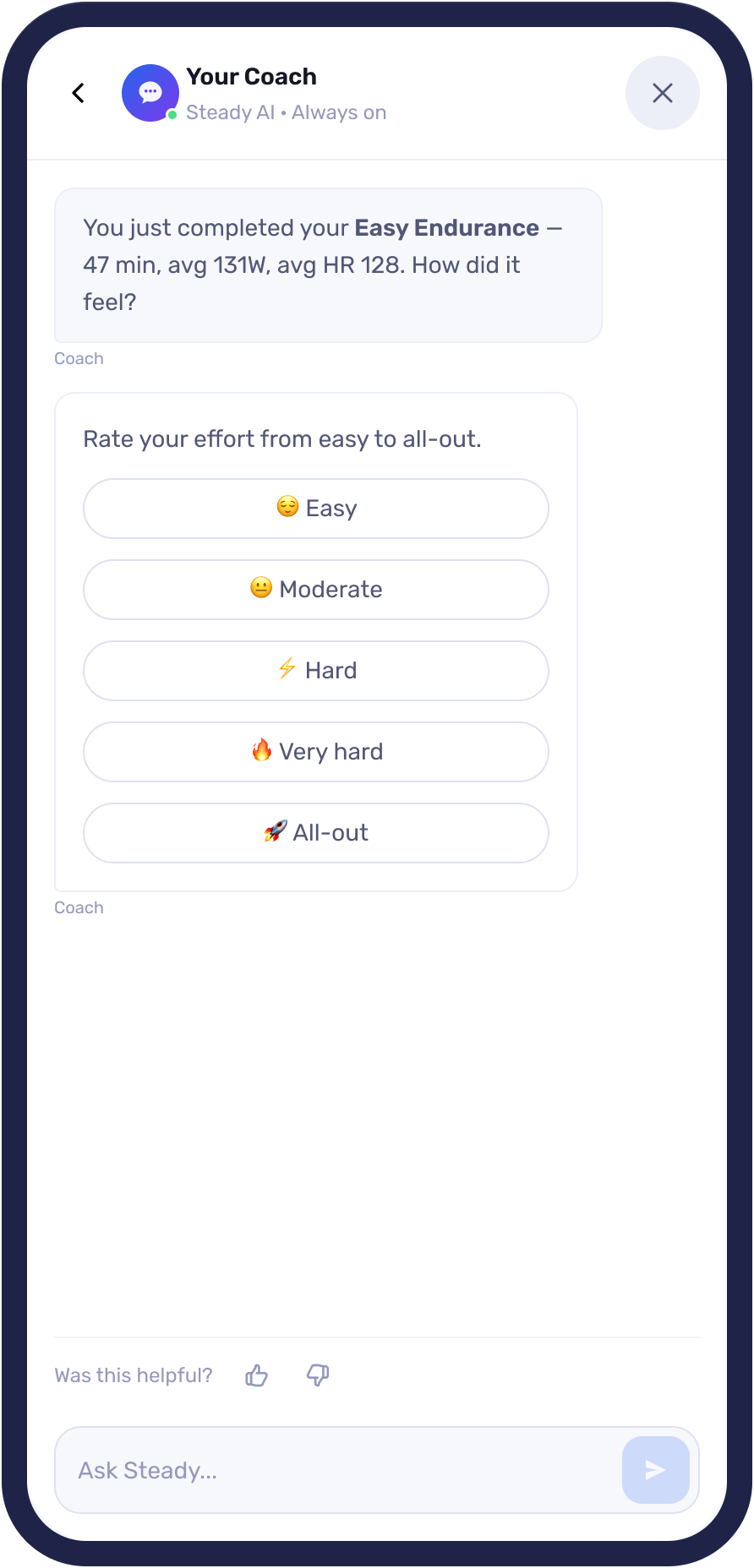

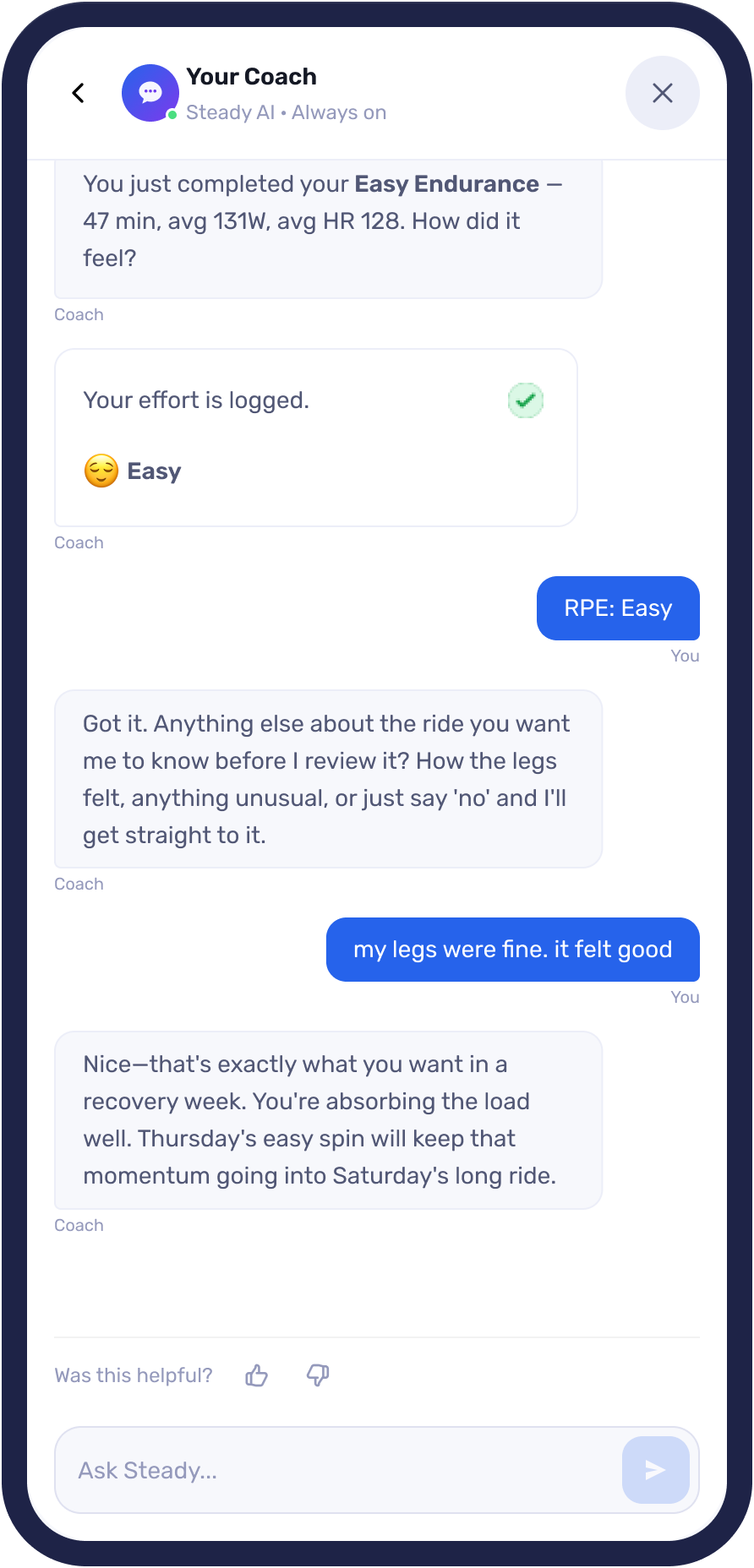

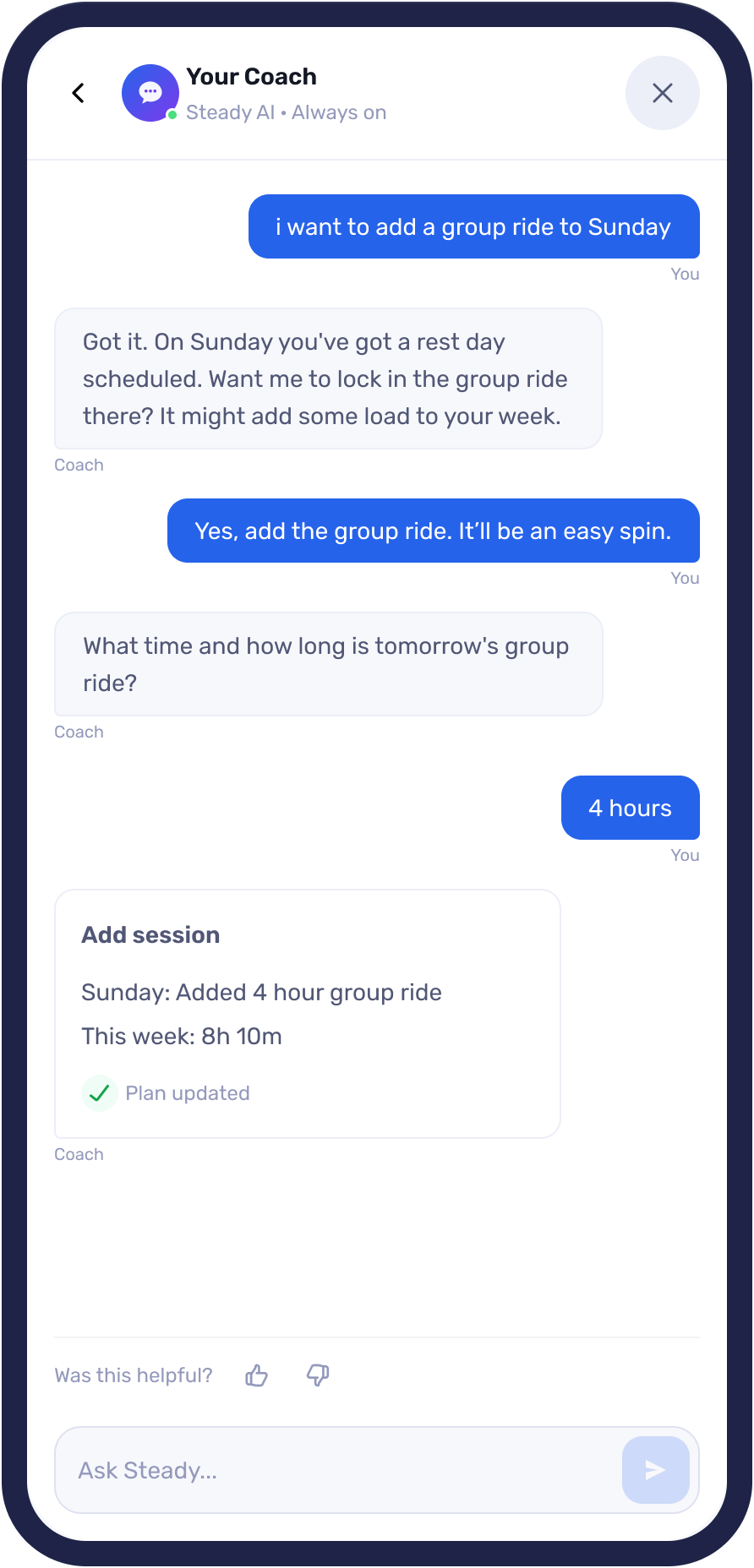

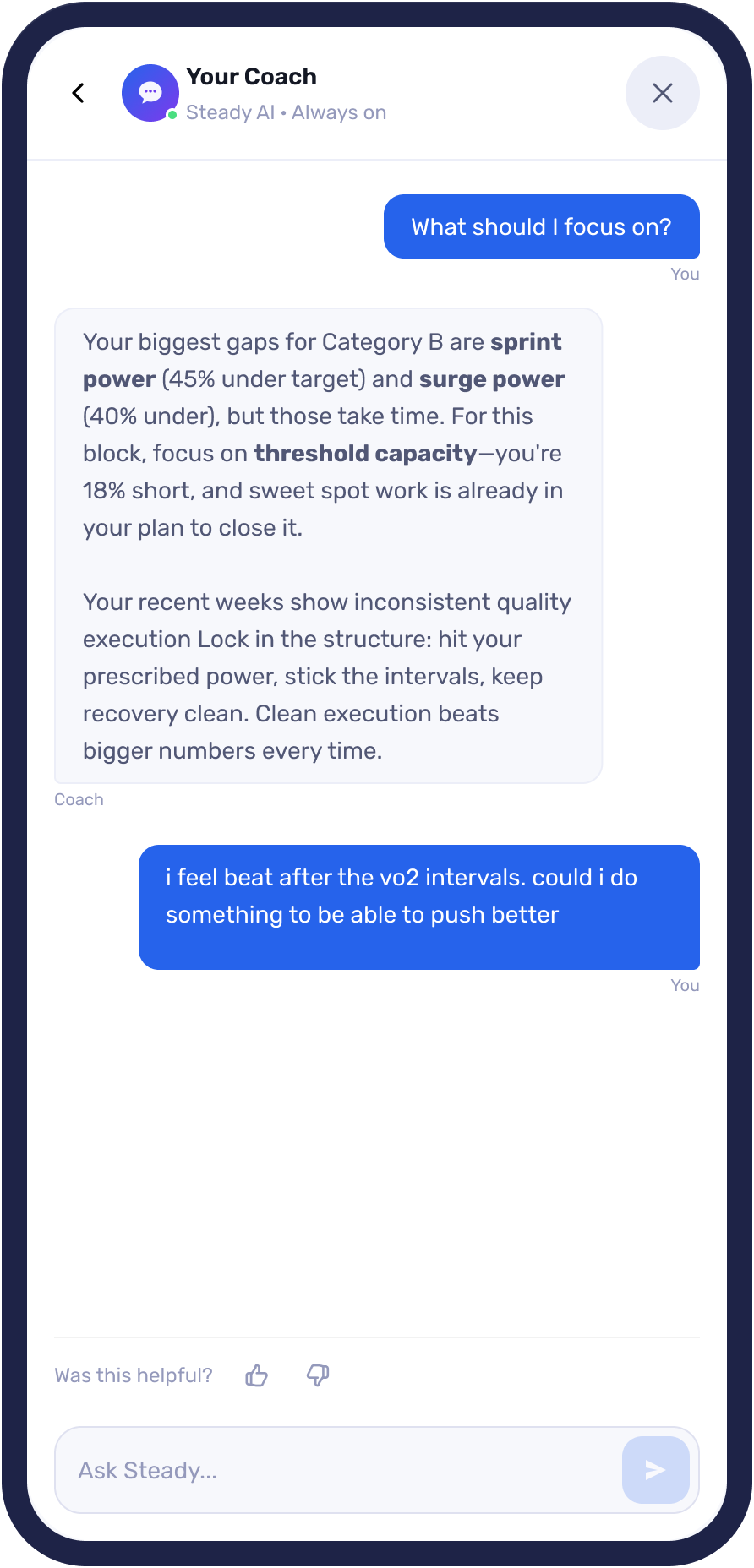

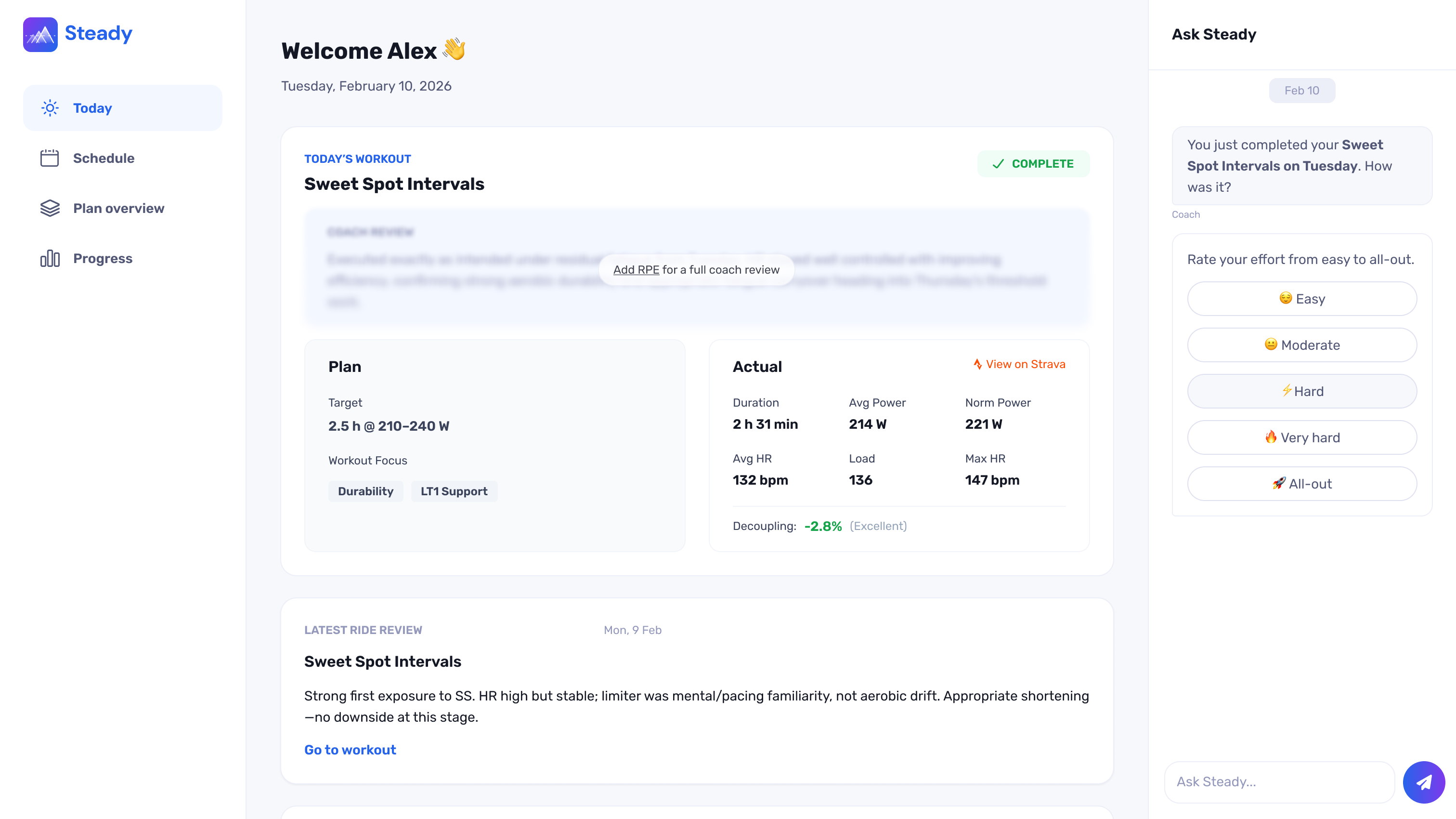

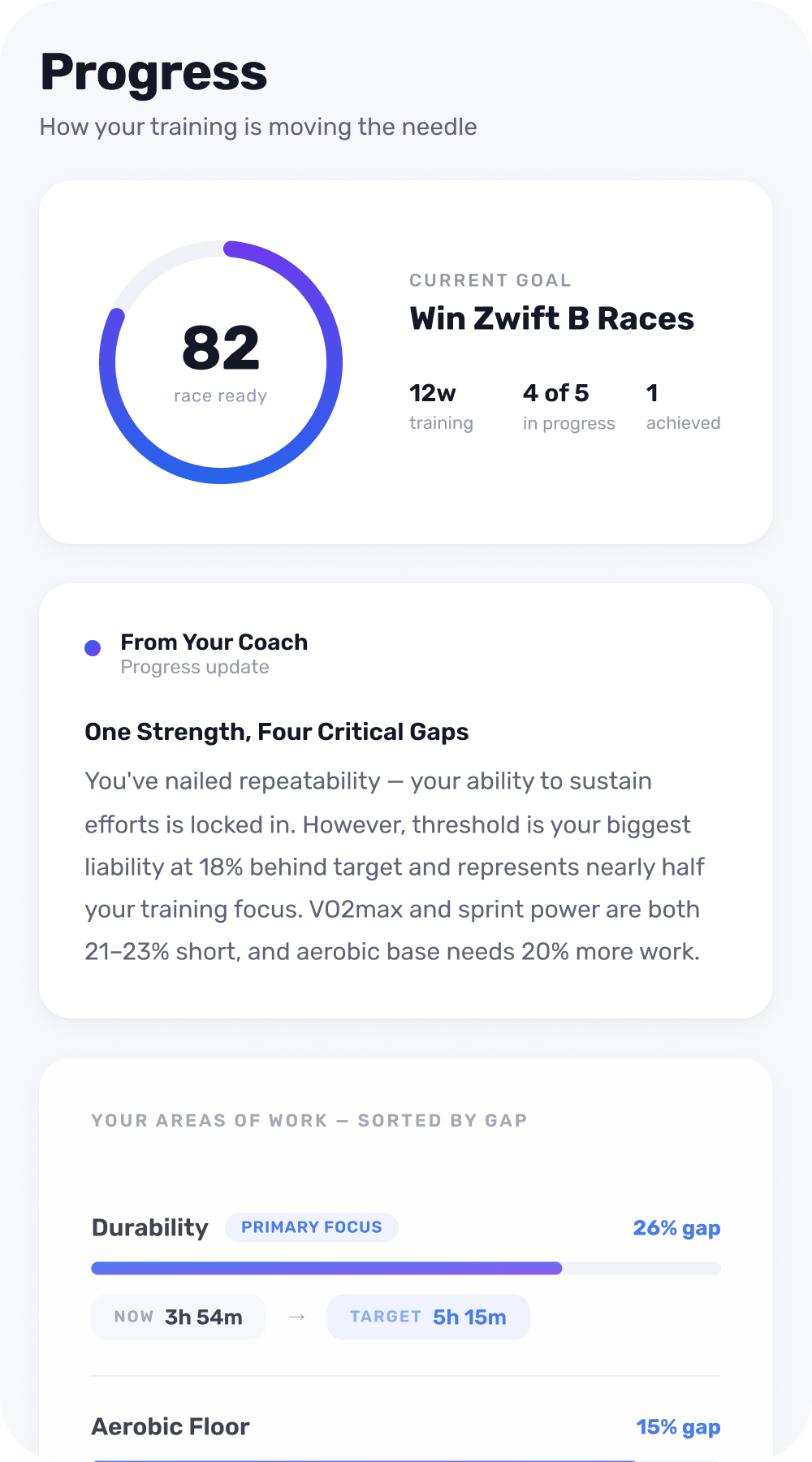

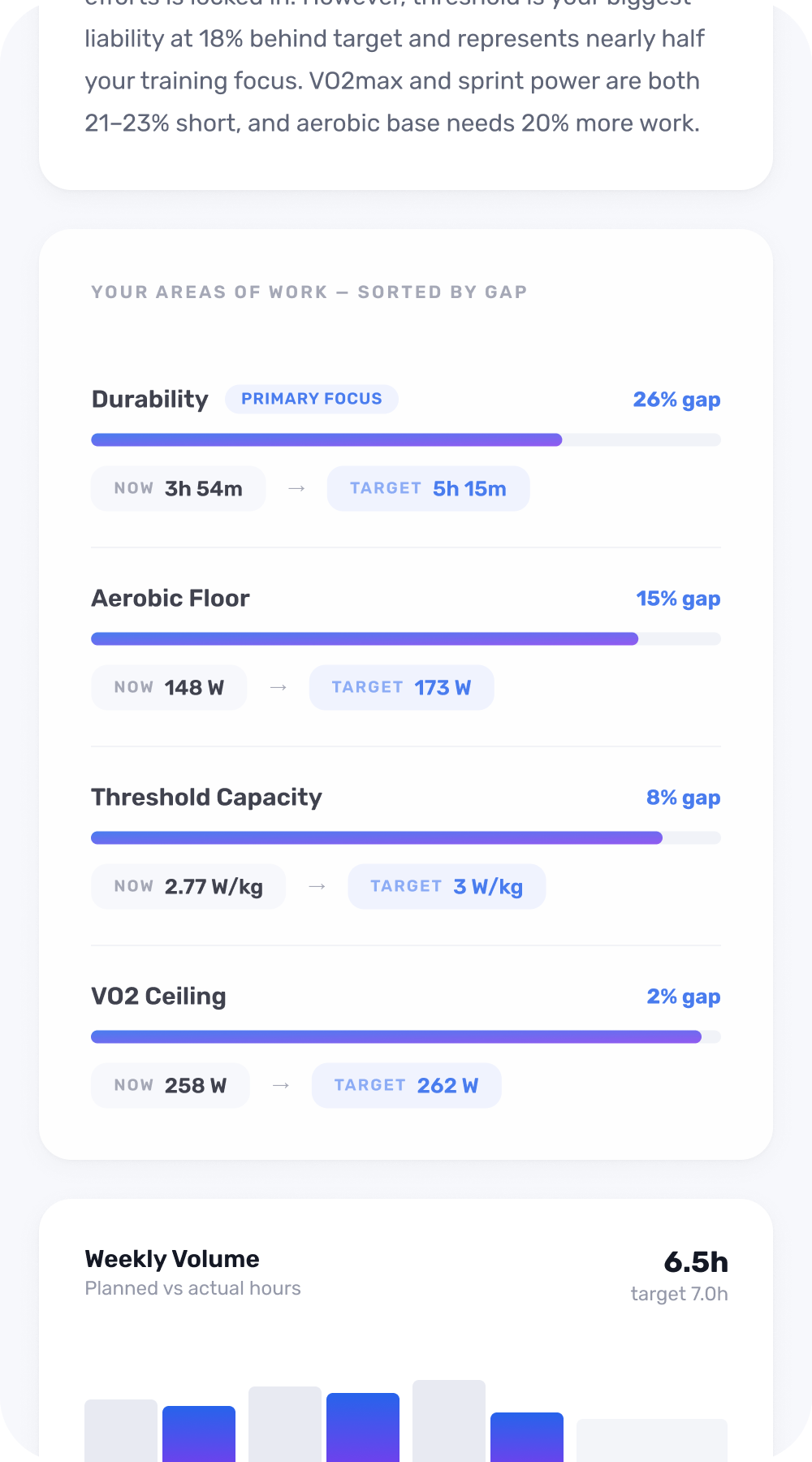

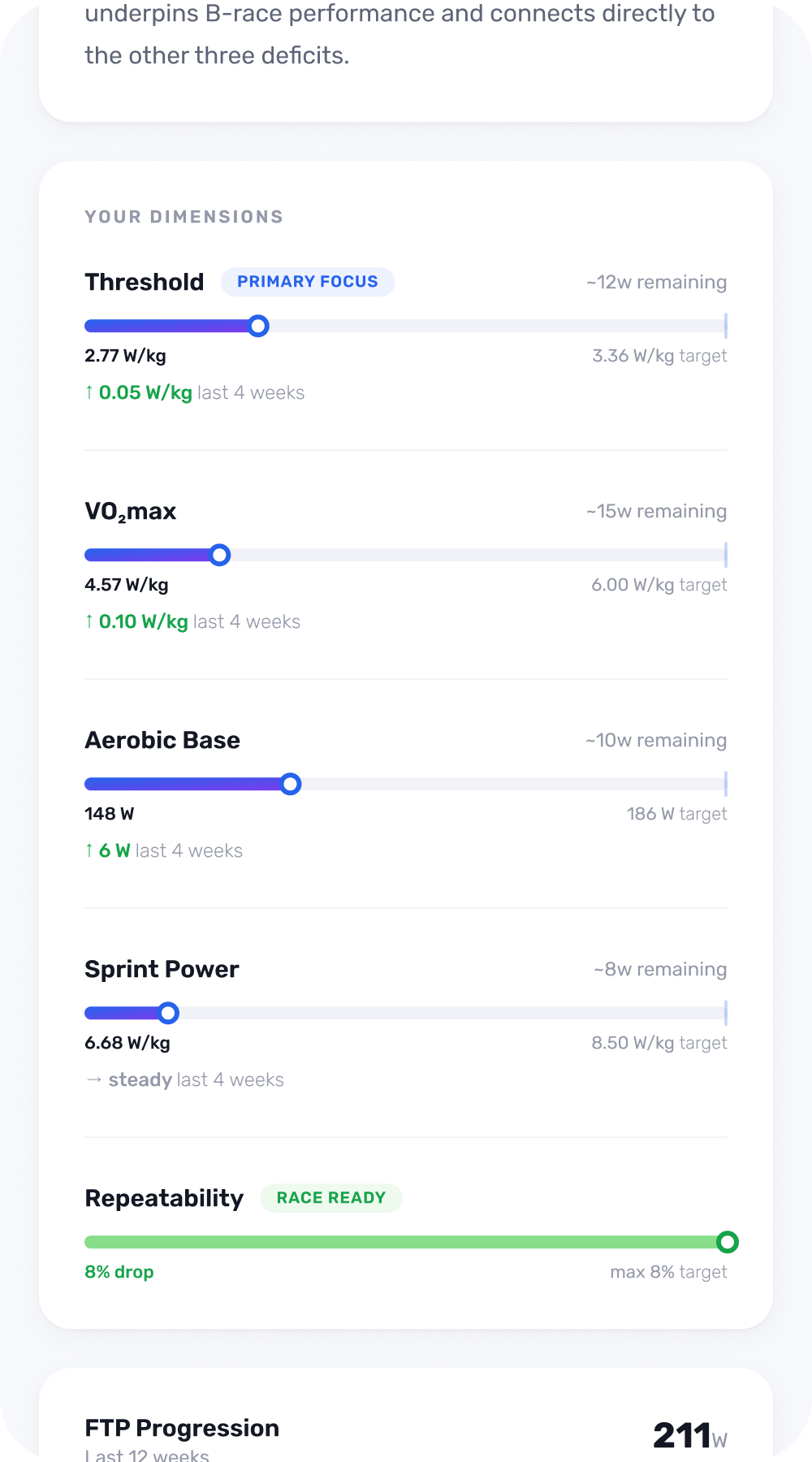

Steady is positioned as a coaching product. The reference point for messaging and pricing is a human cycling coach, not other training apps. The app was designed to feel like a coach rather than a training plan with a dashboard: coaching text over raw numbers, explanations over a flood of data. The interface uses calm tones and minimal data so that the coaching text stands out.

We started with a web app to ship faster and get to real user feedback quickly. A native app will follow once we've learned enough from early usage.